AI models cite organized expertise. The experts who win across brains, search engines, and AI models structure their knowledge for low-energy retrieval. This article explains why the Authority Efficiency Principle decides who gets cited in the AI era.

Key Takeaways

The human brain runs on 20 watts of power and compresses millions of sensory bits into pattern recognition shortcuts every second.

Google shifted from keyword matching to entity understanding, rewarding sites with structured data and named frameworks over generic content.

Large language models retrieve answers from compressed training data and increasingly pull from structured external knowledge bases through retrieval augmented generation.

E-E-A-T is an energy evaluation framework that measures how much verification cost a system must spend to trust a source.

Kimberly Snyder went from 500 dollars per hour in celebrity kitchens to an 8-figure brand after restructuring her expertise into a Personal Media Company.

The Authority Efficiency Principle determines how far and how fast expertise scales across brains, search engines, and AI models.

How the Human Brain Reduces Retrieval Energy Through Compression

The human brain burns 20 watts of power and processes millions of sensory bits per second through compression. Conscious awareness captures a tiny fraction of that input. The rest gets chunked, patterned, and filed into shortcuts that skip full reprocessing.

A chess grandmaster does not scan 32 pieces on a board. They see five or six compressed patterns drawn from thousands of hours of play. A radiologist does not examine every pixel of an MRI. Decades of structured memory route them straight to the anomaly.

Compression is what lets a 20-watt organ outperform systems that burn thousands of watts on the same problem.

This is not a metaphor. It is thermodynamics. Information drifts toward disorder, and authority is what keeps it organized. Every biological, digital, and social system that processes information solves one problem. Maximum signal with minimum energy. The systems that solve it well survive, scale, and win.

How Human Societies Reduce Retrieval Energy With Authorities

Every human civilization has produced authorities (gurus, elders, priests, and specialists) because authority is an energy management strategy. A tribe that lives by the rule "listen to the person who already figured this out" survives better than one that does not.

The alternative is forcing every member to learn everything from scratch. Authority is not a social construct. It is information thermodynamics applied to human survival.

Trust is a biological shortcut. When a patient listens to a doctor, the brain skips the verification steps required to audit the diagnosis. The shortcut is expensive to build and cheap to reuse. Every accurate delivery from a trusted source lowers the cost of relying on them again.

Authority compounds because the brain files reliable sources as low-friction retrieval paths. Over time, the expert becomes the default route to truth in their domain. That compounding is what separates credentials from authority in the creator economy.

How Google Evolved to Reward Structured Authority

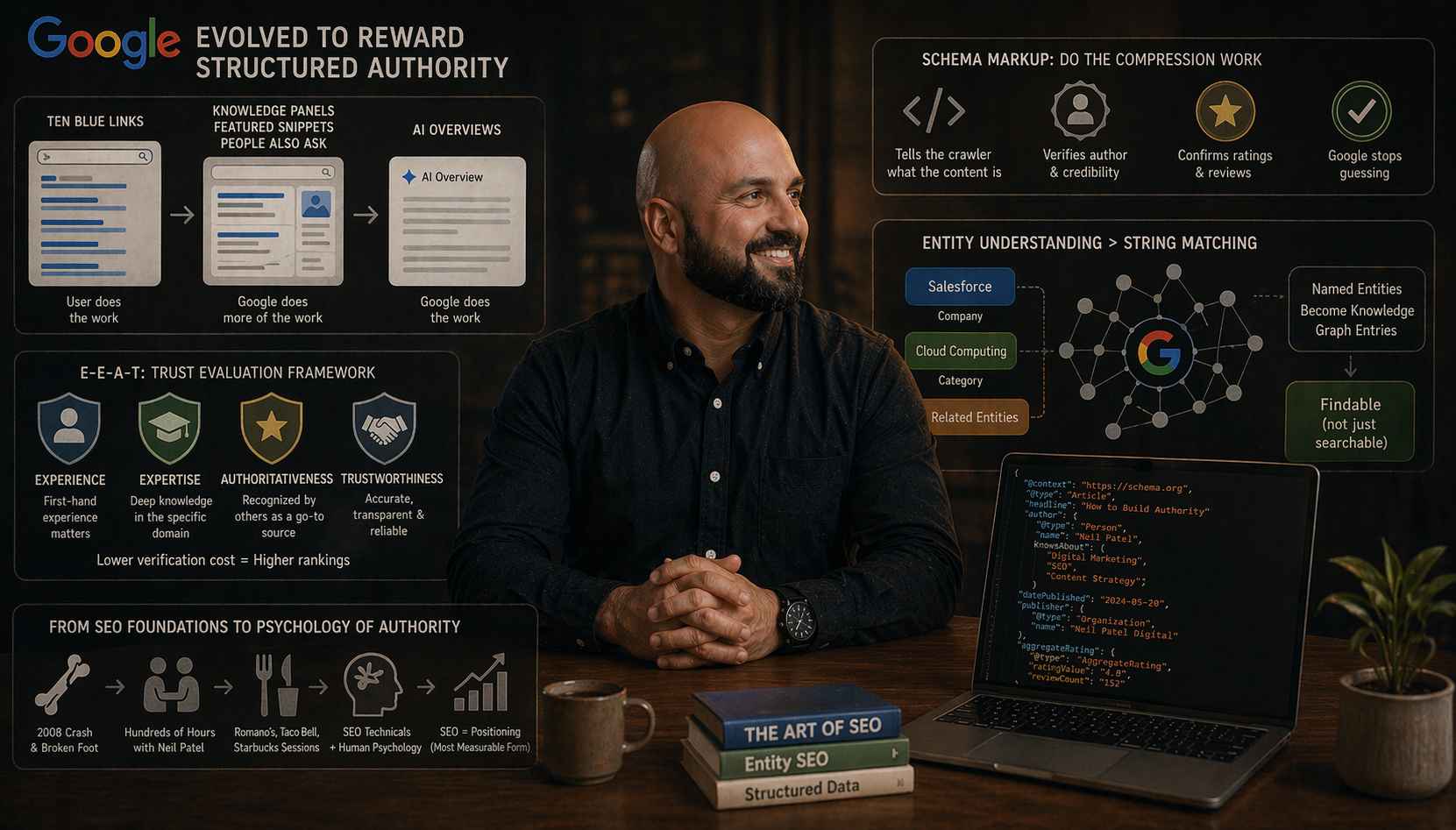

Google Search has spent two decades shifting retrieval cost from the user onto the system. Ten blue links forced users to click, scan, and evaluate. Knowledge panels, featured snippets, People Also Ask, and AI Overviews now compress that work into direct answers.

I watched this evolution from the inside. After a 2008 crash and a broken foot, I reconnected with my high school friend Neil Patel. We met for hundreds of hours at Romano's Macaroni Grill, Taco Bell, and Starbucks. Neil taught me the technical foundations of SEO. I traded back what I had absorbed at the Mike Ferry Organization and from years of Tony Robbins tapes.

What I brought to the trade was psychology: why some experts outperform technically better operators. The lesson that stuck was simple. SEO is positioning in its most measurable form.

Schema markup applies that principle at the infrastructure layer. Structured data tells a crawler that the page is a recipe, who the author is, and what rating readers gave it. The publisher does the compression work in advance. Google stops guessing, and the page becomes eligible for rich results.
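To make the recipe example concrete, here is a minimal sketch of what that advance compression can look like in Python. It builds a JSON-LD object with the schema.org types Recipe, Person, and AggregateRating, then wraps it in the script tag a page would carry. The function names, the recipe, the author, and the rating figures are placeholders invented for illustration, not data from a real site.

```python
import json


def build_recipe_jsonld(name, author_name, rating_value, rating_count):
    """Return a JSON-LD string describing a recipe page with schema.org terms."""
    data = {
        "@context": "https://schema.org",
        "@type": "Recipe",
        "name": name,
        "author": {"@type": "Person", "name": author_name},
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "ratingCount": rating_count,
        },
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld):
    """Wrap a JSON-LD string in the script tag that embeds it in an HTML page."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical values, used only to show the shape of the output.
    print(as_script_tag(build_recipe_jsonld("Green Smoothie", "Jane Doe", 4.8, 212)))
```

The markup only declares what the page claims. Whether a search engine displays or trusts it depends on that engine's own guidelines and checks.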

E-E-A-T is a trust evaluation framework, not a checklist. Experience, Expertise, Authoritativeness, and Trustworthiness approximate what a human would find credible. The underlying math is energy. A source with a demonstrated track record and deep interlinked coverage lowers verification cost. A thin, unknown site raises it.

Entity understanding replaced string matching years ago. Google now knows Salesforce is a company, cloud computing is a category, and the two are related. Experts who name their frameworks, like ROAC or the Digital Concert Hall, become entries in the knowledge graph. Generic terms keep you a commodity. Named entities make you findable instead of searchable.
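The difference between a string and an entity is easier to see in a toy model. The sketch below stores the Salesforce example as subject, predicate, object triples and compares a naive substring check with a graph lookup. The class name, the predicate names, and the sample page title are assumptions made for illustration. Google's actual knowledge graph is far larger and is not built this way.

```python
from collections import defaultdict


class TinyKnowledgeGraph:
    """A toy triple store: subject -> set of (predicate, object) pairs."""

    def __init__(self):
        self._edges = defaultdict(set)

    def add(self, subject, predicate, obj):
        self._edges[subject].add((predicate, obj))

    def facts(self, subject):
        """Return every known fact about a subject, sorted for stable output."""
        return sorted(self._edges.get(subject, set()))

    def related(self, a, b):
        """True if any stored fact links a to b in either direction."""
        forward = any(obj == b for _, obj in self._edges.get(a, ()))
        backward = any(obj == a for _, obj in self._edges.get(b, ()))
        return forward or backward


def build_example_graph():
    graph = TinyKnowledgeGraph()
    graph.add("Salesforce", "is_a", "Company")
    graph.add("Cloud computing", "is_a", "Category")
    graph.add("Salesforce", "operates_in", "Cloud computing")
    return graph


if __name__ == "__main__":
    graph = build_example_graph()
    page_title = "Salesforce pricing explained"  # hypothetical page title
    # String matching misses the topic because the words never appear.
    print("cloud computing" in page_title.lower())
    # Entity lookup finds the relationship anyway.
    print(graph.related("Salesforce", "Cloud computing"))
```

A named framework plays the same role as "Salesforce" here. It gives a system a node to attach facts to instead of a run of generic words.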

How AI Systems Reduce Retrieval Energy Through Structure

Large language models compress billions of documents into weighted connections between concepts. A prompt activates an association network rather than triggering a database lookup. The cleaner the training data, the more accurate the retrieval.

Retrieval augmented generation extends that logic. The model pulls from an external knowledge base when generating answers. The cleaner the structure of that base, the fewer tokens the model burns finding what matters. Data quality beats data quantity every time.
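Here is a stripped-down sketch of the retrieval step, assuming a tiny hand-written knowledge base. It ranks entries by simple word overlap with the question and pastes the best matches into a prompt. Production systems typically use embedding search rather than word overlap, so treat the scoring as a stand-in. The function names, the entry format, and the prompt wording are all assumptions, and the entries paraphrase ideas from this article.

```python
import re

# A toy knowledge base; each entry has a clear title and a short body.
KNOWLEDGE_BASE = [
    {"title": "Authority Efficiency Principle",
     "body": "Expertise structured for low-energy retrieval scales further and faster."},
    {"title": "Guru Ladder",
     "body": "Five stages: Generalist, Specialist, Authority, Guru, and Guru's Guru."},
    {"title": "Personal Media Company",
     "body": "Website as the hub, email list as the trust layer, "
             "social feeds as the discovery layer, courses as the monetization layer."},
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, knowledge_base, top_k=2):
    """Rank entries by word overlap with the query (stand-in for embedding search)."""
    query_words = tokenize(query)
    scored = []
    for entry in knowledge_base:
        overlap = len(query_words & tokenize(entry["title"] + " " + entry["body"]))
        if overlap:
            scored.append((overlap, entry["title"], entry))
    # Highest overlap first; ties broken alphabetically by title.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [entry for _, _, entry in scored[:top_k]]


def build_prompt(query, passages):
    """Assemble the text a model would receive: retrieved context, then the question."""
    context = "\n".join(f"- {p['title']}: {p['body']}" for p in passages)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    question = "What are the stages of the Guru Ladder?"
    print(build_prompt(question, retrieve(question, KNOWLEDGE_BASE)))
```

Notice where the cost goes. Every passage that reaches the prompt is paid for in tokens, so a base with clean titles and tight bodies returns the right entry with less noise attached.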

AI agents now navigate the web through accessibility standards originally built for people with disabilities. The alt text that describes an image to a screen reader doubles as the alt text a browsing agent reads. HTML labels built for assistive technology are the same labels an AI uses to decide if you are worth citing.
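A quick way to see whether a page carries those labels is to audit it. The sketch below uses Python's standard `html.parser` module to list images without alt text and form inputs without a label. The class name, the report format, and the sample markup are assumptions for illustration. It is a rough check, not a full accessibility test: it does not detect inputs nested inside a label element, and an empty alt attribute is legitimate on purely decorative images, so flagged items need a human look.

```python
from html.parser import HTMLParser


class AccessibilityAudit(HTMLParser):
    """Collect images lacking alt text and inputs lacking an explicit label."""

    def __init__(self):
        super().__init__()
        self.images_missing_alt = []
        self._labelled_ids = set()
        self._inputs = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img":
            # Valueless or empty alt attributes count as missing here.
            if not (attributes.get("alt") or "").strip():
                self.images_missing_alt.append(attributes.get("src") or "(no src)")
        elif tag == "label":
            if attributes.get("for"):
                self._labelled_ids.add(attributes["for"])
        elif tag == "input" and attributes.get("type") != "hidden":
            self._inputs.append(attributes)

    def report(self):
        # Resolve labels after parsing, since a label may follow its input.
        unlabelled = [
            a.get("id") or "(no id)"
            for a in self._inputs
            if not a.get("aria-label") and a.get("id") not in self._labelled_ids
        ]
        return {
            "images_missing_alt": self.images_missing_alt,
            "inputs_missing_label": unlabelled,
        }


def audit(html):
    parser = AccessibilityAudit()
    parser.feed(html)
    parser.close()
    return parser.report()


SAMPLE_HTML = """
<img src="hero.png" alt="Author speaking on stage">
<img src="chart.png">
<label for="email">Email</label><input id="email" type="email">
<input id="phone" type="tel">
<input type="search" aria-label="Search articles">
"""

if __name__ == "__main__":
    print(audit(SAMPLE_HTML))
```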

Nikki Lam, VP of Search at NP Digital, walked me through this pattern. The conversation happened during an interview for Neil's YouTube channel. Her enterprise team calls it "search everywhere optimization." Your website is now your SEO asset, your paid asset, and your AI asset at the same time. The structured knowledge base underneath everything decides if any retrieval system finds you worth trusting.

How Experts Become Retrievable at Every Scale

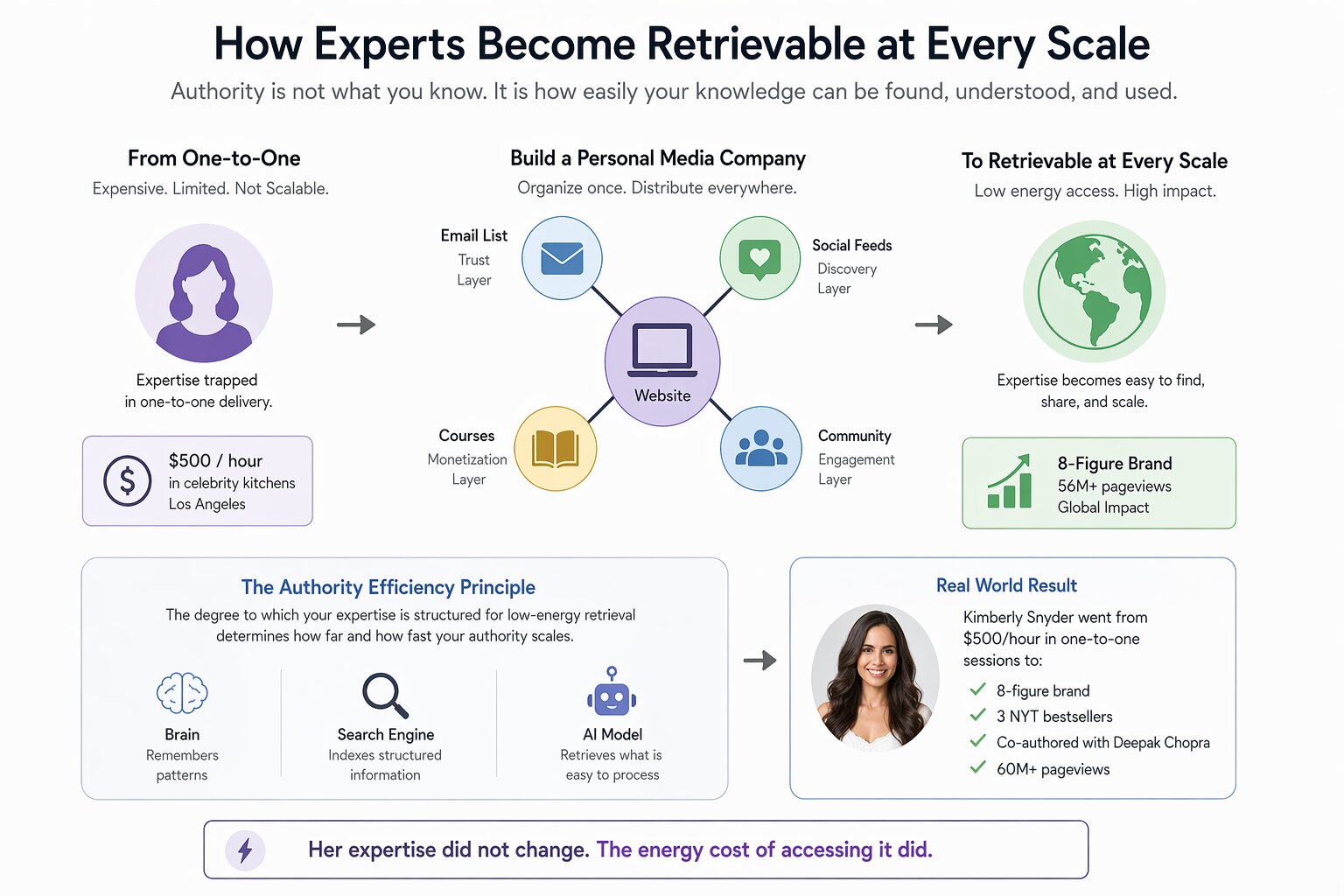

Authority in the AI era works the same way authority has always worked. Retrievability is the currency. The experts who get cited by AI, surfaced by search, and recommended by audiences share one trait. Their knowledge is organized like a knowledge graph.

I call this the Authority Efficiency Principle. The degree to which your expertise is structured for low-energy retrieval determines how far and how fast your authority scales. The substrate does not matter. A brain, a search engine, or an AI model. Same physics.

In 2012, Kimberly Snyder was a nutritionist charging 500 dollars per hour in celebrity kitchens in Los Angeles. Her knowledge could help millions. Her expertise was trapped in a one-to-one delivery model. We built her a Personal Media Company.

Website as the hub. Email list as the trust layer. Social feeds as the discovery layer. Courses as the monetization layer. The result was an 8-figure brand and over 60 million pageviews. Three New York Times bestsellers followed, including one co-authored with Deepak Chopra.

Her expertise did not change. The energy cost of accessing it collapsed.

Why the Guru Ladder Maps the Same Physics

In GURU, INC., the Guru Ladder maps the progression from unknown to undeniable. Five stages: Generalist, Specialist, Authority, Guru, and Guru's Guru. Each level is a compression milestone.

A Generalist publishes scattered content across random topics. A Specialist narrows into a niche. An Authority builds deep, interlinked clusters that others follow. A Guru compresses that work into named frameworks and systems that operate without the creator present. A Guru's Guru produces an ecosystem where other leaders teach the methodology.

That ladder mirrors what happens when a website moves from thin content to topical authority. Same physics. Same outcome. The substrate does not matter. Human memory, Google's index, or an AI model's training data. The path is identical: compress, structure, and reduce the cost of retrieval.

How to Build Authority That Every System Can Read

The substrate changes every few years. The law does not. SEO was structured knowledge for crawlers. Content strategy was structured knowledge for audiences. Generative engine optimization is structured knowledge for models. The ROAC framework maps how attention passes through four gates before it converts into business outcomes.

Founders who win across all three surfaces start from the same playbook. Name your frameworks so they become retrievable entities. Build topical depth so every system reads you as the definitive source. Interlink your content so the relationships are visible to machines. Position your expertise with clarity before you scale distribution.
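The interlinking step is the easiest one to check mechanically. As a rough sketch, treat the site as a graph of internal links and walk it from the homepage. Any page the walk never reaches is invisible to a crawler that follows links. The function name, the link map format, and the URLs below are hypothetical. A real audit would build the map from a crawl or a sitemap.

```python
def find_orphans(links, hub):
    """Return pages that cannot be reached from the hub by following internal links."""
    seen = {hub}
    stack = [hub]
    while stack:
        page = stack.pop()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                stack.append(target)
    # Every page that appears as a source or a target of any link.
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(all_pages - seen)


# Hypothetical site map: each page lists the internal pages it links to.
SITE_LINKS = {
    "/": ["/frameworks/roac", "/guru-ladder"],
    "/frameworks/roac": ["/guru-ladder", "/"],
    "/guru-ladder": ["/frameworks/roac"],
    "/old-post": ["/"],
}

if __name__ == "__main__":
    print(find_orphans(SITE_LINKS, "/"))
```

In this sample, `/old-post` links out but nothing links in, so it is reported as an orphan. Linking to it from the relevant cluster page would fix that.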

Authority is not a marketing strategy. It is an energy efficiency mechanism that appears at every scale of intelligence, from neurons to networks to civilizations. The experts who align with that physics own the next era. Not because they gamed an algorithm. Because they understood what the algorithm was always trying to do.

Physics does not care if you believe in it. It works anyway.

Frequently Asked Questions

What is the Authority Efficiency Principle?

The Authority Efficiency Principle is the rule that structured expertise scales further and faster than unstructured expertise across any retrieval system. Every system, biological or digital, rewards low-energy processing. Experts who compress their knowledge into named frameworks win across brains, Google, and AI at once.

How do AI systems decide which experts to cite?

AI systems cite sources whose knowledge is structured for efficient retrieval. That means named entities, clear HTML labels, alt text, and deep topical coverage. Language models pull from training data and external knowledge bases. Both favor content where the relationships between concepts are already mapped.

Why does naming a framework matter for SEO and AI visibility?

A named framework becomes an entity in the knowledge graph rather than a string of generic words. That entity makes you uniquely retrievable. Search engines and AI models identify and associate it with you. Generic terms leave you competing against every commodity page.

What is search everywhere optimization?

Search everywhere optimization structures a website to perform across search engines, AI assistants, and paid surfaces at once. The concept treats the website as one structured knowledge base that every retrieval system can read, instead of optimizing for Google alone.

How is E-E-A-T different from traditional SEO signals?

E-E-A-T evaluates Experience, Expertise, Authoritativeness, and Trustworthiness by approximating what a human would find credible. Traditional SEO signals like backlinks and keyword density still matter, but E-E-A-T goes a level deeper. It measures how much verification cost a system must spend to trust the source. That is the deeper driver of modern rankings.

Can a small brand compete with large sites under these rules?

Small brands compete effectively when they structure expertise into named frameworks, deep topical clusters, and consistent authorship across platforms. Scale does not determine authority. Retrievability does. A focused operator can impose a lower processing cost on search engines and AI than a large, unfocused publisher.

What is the fastest way to improve authority efficiency right now?

Name at least one proprietary framework. Build a topical content cluster around it with internal links. Add schema markup plus clear alt text and accessibility labels. Those three moves reduce retrieval cost for every system at once. That is what the Authority Efficiency Principle measures.